I figured that silicone would be an easy place to start as it was a forgiving material.

This is an outline of the technique that I developed. It will allow you to make rubber versions of any object that you want (within reason).

This is not a lesson in part or mold design. I'm sure other people have covered that in more detail than I can.

This post will go through the process of making a squishy silicone toy Kia Soul.

Preparation

To get started with this technique you will need:

- silicone rubber (I used a trial kit from BBDINO)

- mixing cups

- vaseline

- rubber bands

- a 3D printer

- 3D CAD

Things I found to be useful as well:

- silicone rubber dye

- X-Acto knife or medical scissors (to clean up the flashing)

- syringe (to apply the vaseline)

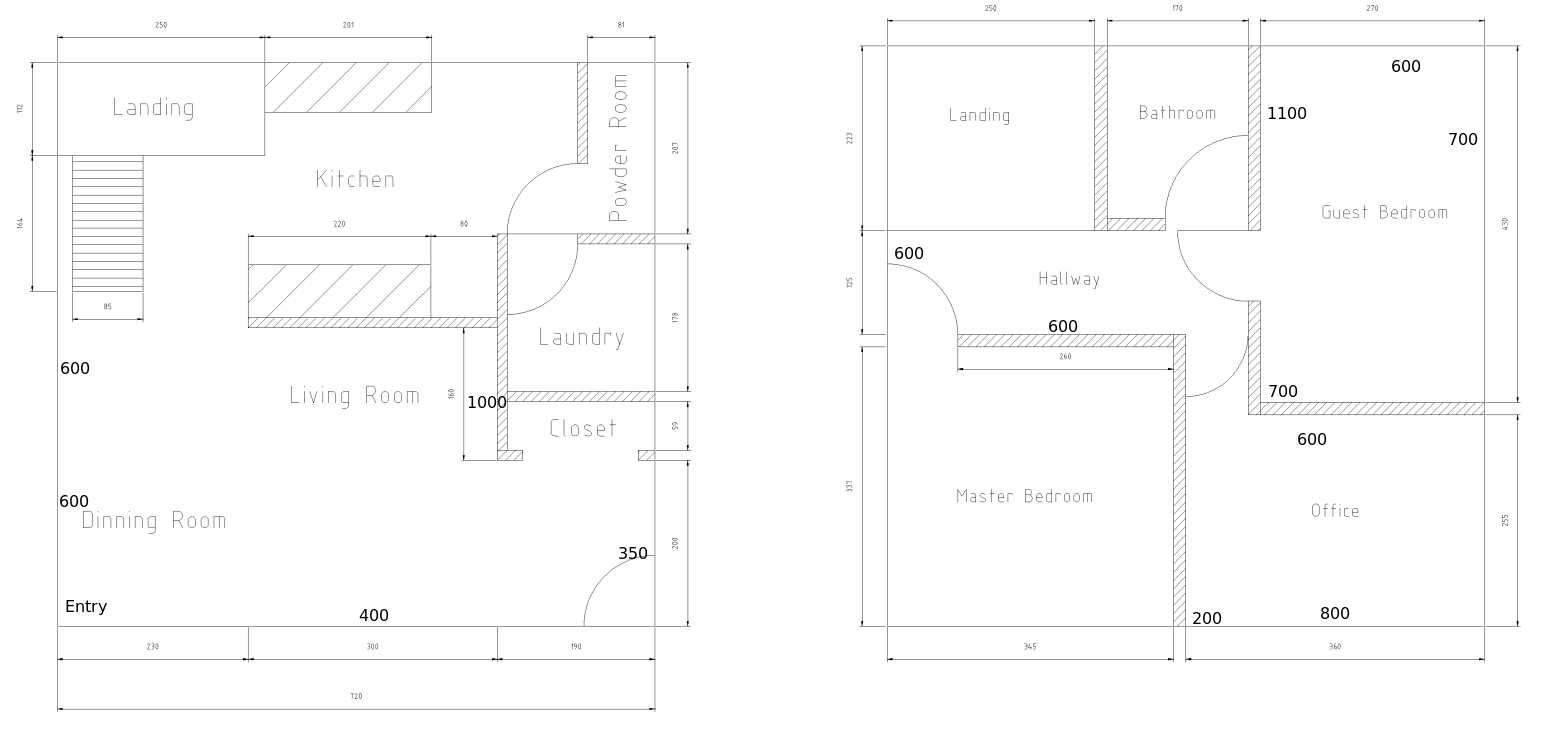

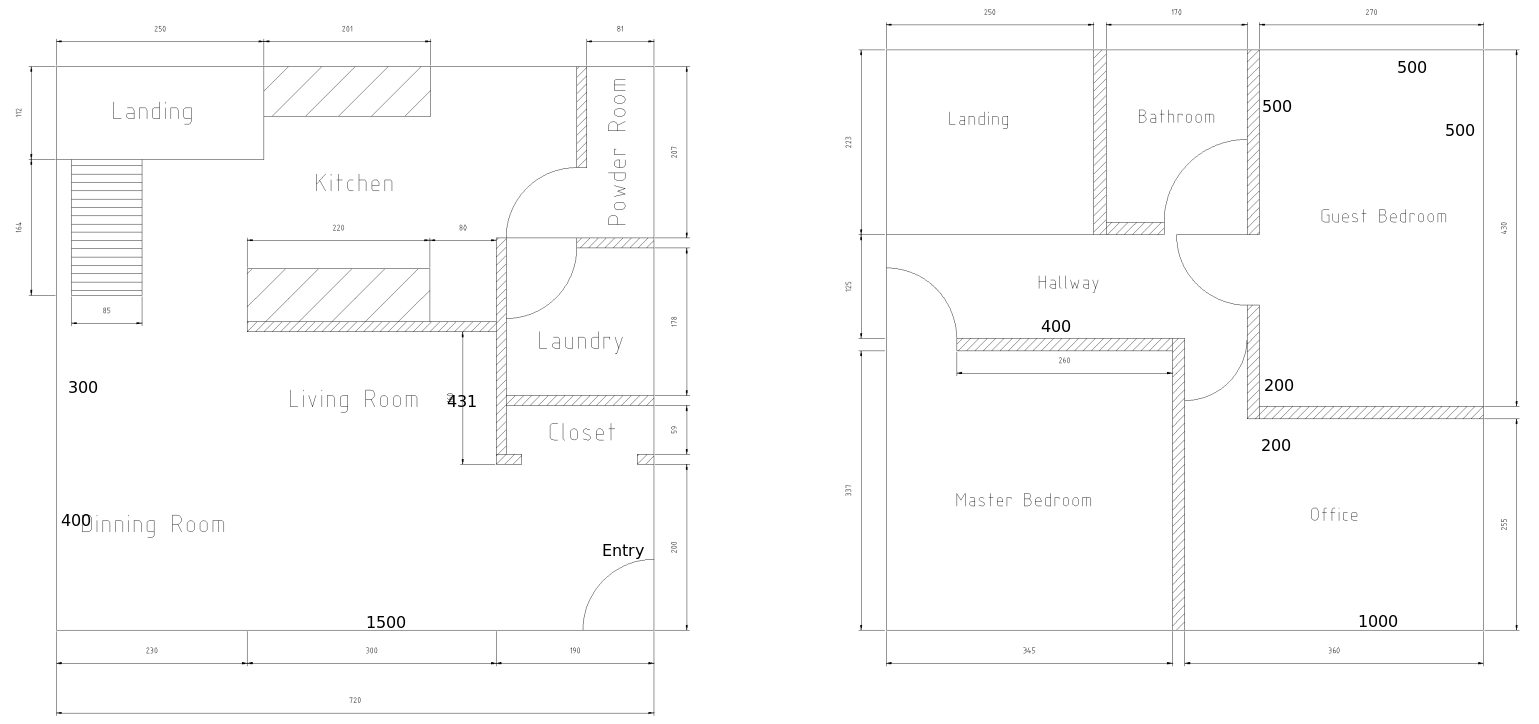

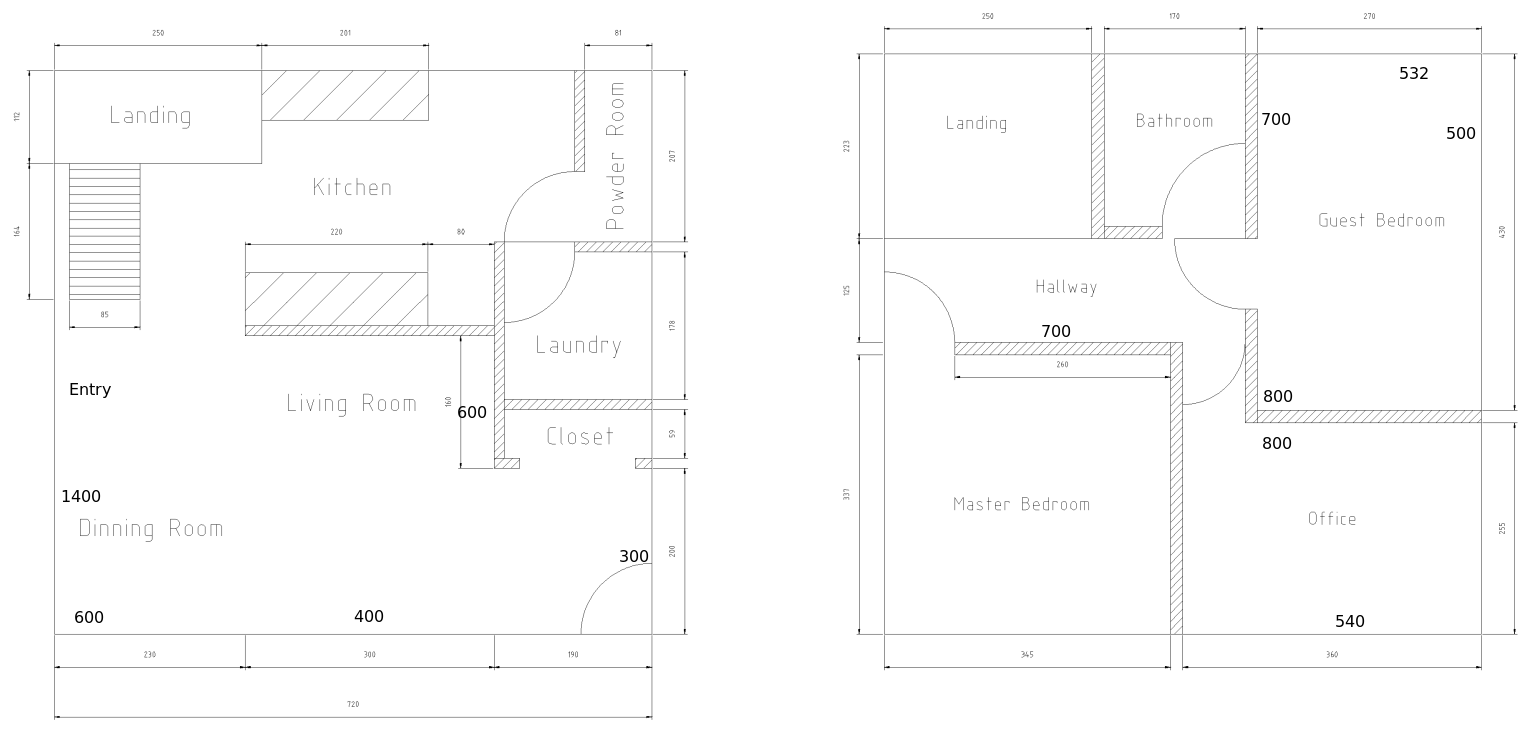

Mold Design

The first step in any mold project is figuring out what you want to make. Make sure to visualize the object forming and coming out of the mold. Silicone is pretty forgiving, so things like draft angles are not a huge concern, but they merit some thought. Ask yourself:

- How many parts make up the mold?

- How will I pour the liquid in to fill it?

- How will bubbles escape?

- How will I remove the solid part?

I decided that a two-part mold with a pour opening in the top fits the needs of this project.

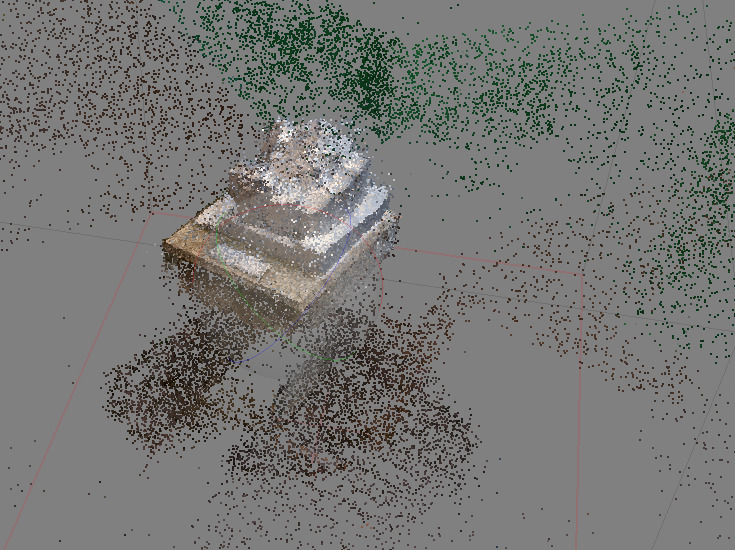

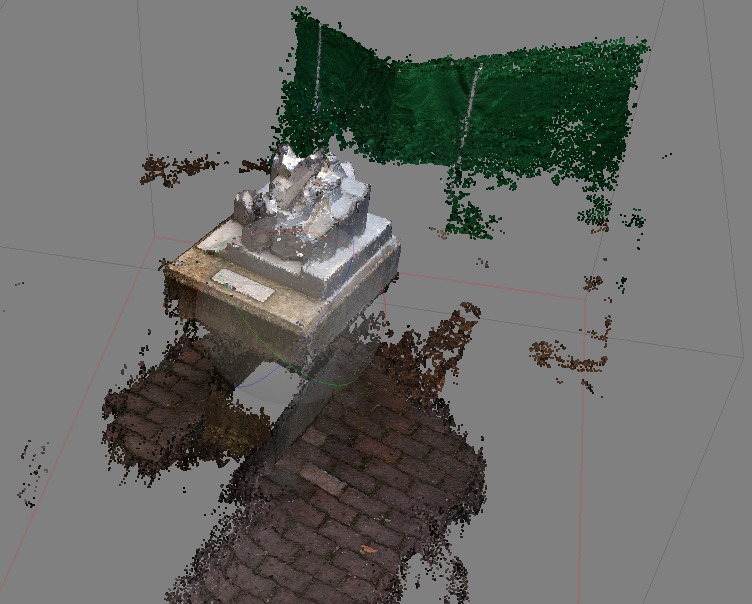

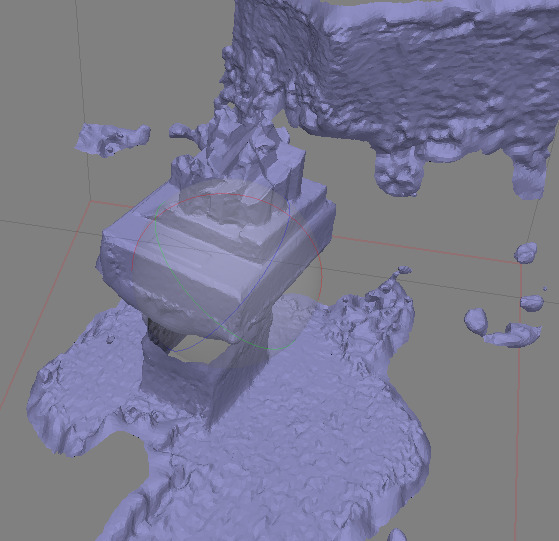

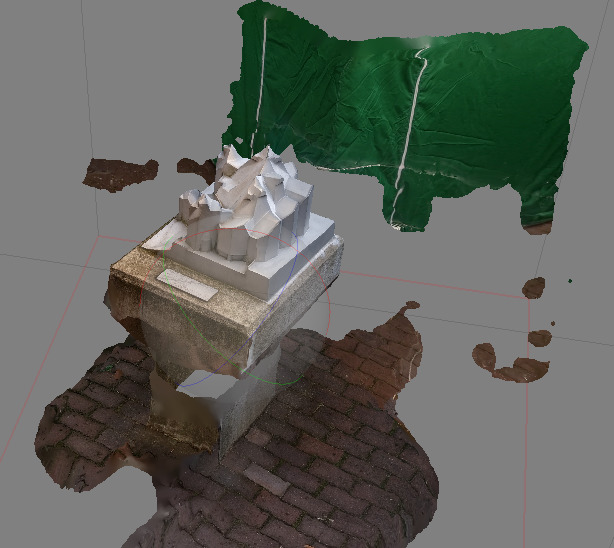

I am going to make this Kia Soul model that I have prepared. Using Blender, I remeshed it, removing any inward-facing angles that would make taking it out of the mold more difficult.

For molds, I use my own CAD suite called DSLCAD. The goal is to create a two-part mold that I can pour the silicone into from the top.

DSLCAD is a parametric CAD tool that uses a programming language to create models. This is the source code needed to create the mold halves:

|

After rendering this model, you will get a 3MF file containing the two mold halves. Any 3D slicer should be able to handle that file and send it to your printer.

The first half has a groove.

The second half has a tongue that fits into the groove. This helps keep the silicone from leaking out.
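
As a rough illustration of the sizing involved, here is a small Python sketch (not the DSLCAD source) that works out how large each printed half needs to be for a given part. The function name, the 5 mm wall thickness, the choice of split axis, and the example dimensions are all assumptions made for illustration.

```python
def mold_half_size(part_size_mm, wall_mm=5.0, split_axis=1):
    """Return the outer (x, y, z) size in mm of one mold half.

    part_size_mm: bounding box of the part as (x, y, z).
    wall_mm:      assumed wall thickness added on every side.
    split_axis:   index of the axis the mold is split across (assumed: 1 = y).
    """
    if wall_mm <= 0:
        raise ValueError("wall thickness must be positive")
    # The full block is the part's bounding box plus a wall on both sides.
    outer = [size + 2 * wall_mm for size in part_size_mm]
    # Each half gets half of the block along the split axis.
    outer[split_axis] = outer[split_axis] / 2
    return tuple(outer)


if __name__ == "__main__":
    # Hypothetical 60 x 30 x 25 mm toy car.
    print(mold_half_size((60, 30, 25)))
```

Comparing the result against your printer's build volume before modeling the tongue, groove, and pour hole can save a failed print.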

As you can see, both sides should be 3D printable. I oriented them on their sides with the cavity facing upward to avoid printing overhangs.

There is a bit of overlap near the car tires that could be a problem when releasing the part from the mold. We are using 20A silicone, which bends enough that the part can still be pulled out. However, if you are using a harder silicone, try to avoid trapped angles like these.
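
If you want to check for trapped angles before printing, one common heuristic is to compare each face normal against the direction the mold half is pulled away. The Python sketch below is a simplified illustration of that idea, not something DSLCAD or Blender does for you. It assumes you already have the outward normals of the faces formed by one mold half, and the function names are hypothetical.

```python
import math


def draft_angle_deg(normal, pull):
    """Draft angle in degrees of a face relative to the pull direction.

    Positive: the face releases freely. Zero: the face is parallel to the pull.
    Negative: the face is an undercut (a trapped angle) for this mold half.
    """
    dot = sum(n * p for n, p in zip(normal, pull))
    length = math.sqrt(sum(n * n for n in normal)) * math.sqrt(sum(p * p for p in pull))
    if length == 0:
        raise ValueError("normal and pull must be non-zero vectors")
    # Clamp to guard against rounding slightly outside [-1, 1].
    sine = max(-1.0, min(1.0, dot / length))
    return math.degrees(math.asin(sine))


def undercut_faces(normals, pull, min_draft_deg=0.0):
    """Return indices of faces whose draft angle is below min_draft_deg."""
    return [i for i, n in enumerate(normals) if draft_angle_deg(n, pull) < min_draft_deg]


if __name__ == "__main__":
    # Hypothetical normals for faces formed by the half pulled along +z.
    faces = [(0, 0, 1), (1, 0, 0), (0.5, 0, -0.5)]
    print(undercut_faces(faces, pull=(0, 0, 1)))
```

With a soft silicone, a few flagged faces are usually tolerable. With a harder one, it is worth remeshing until the list is empty.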

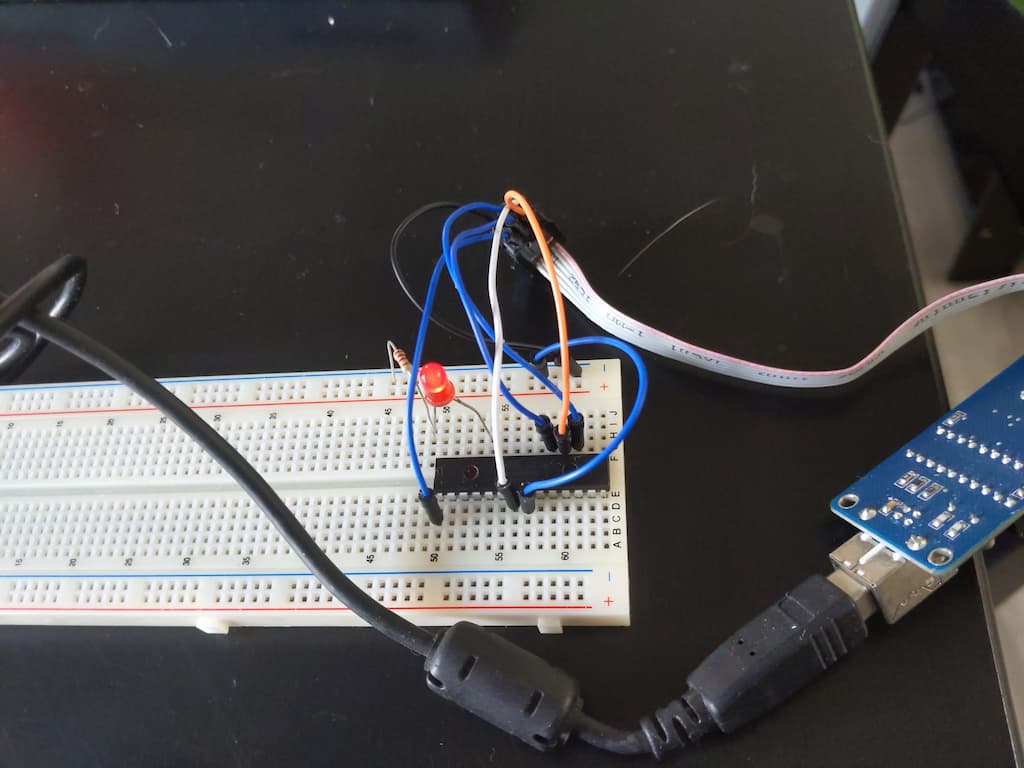

Casting

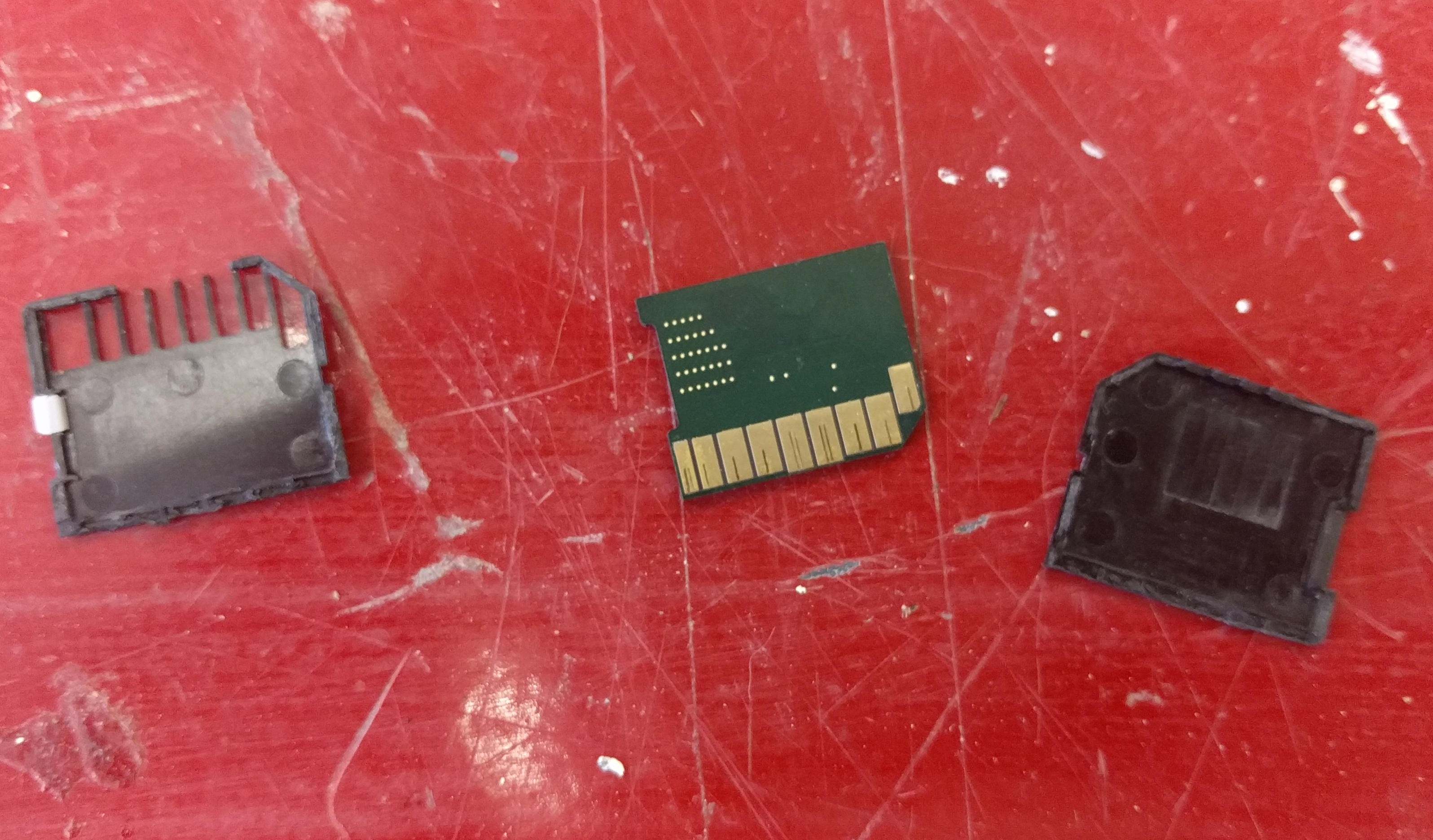

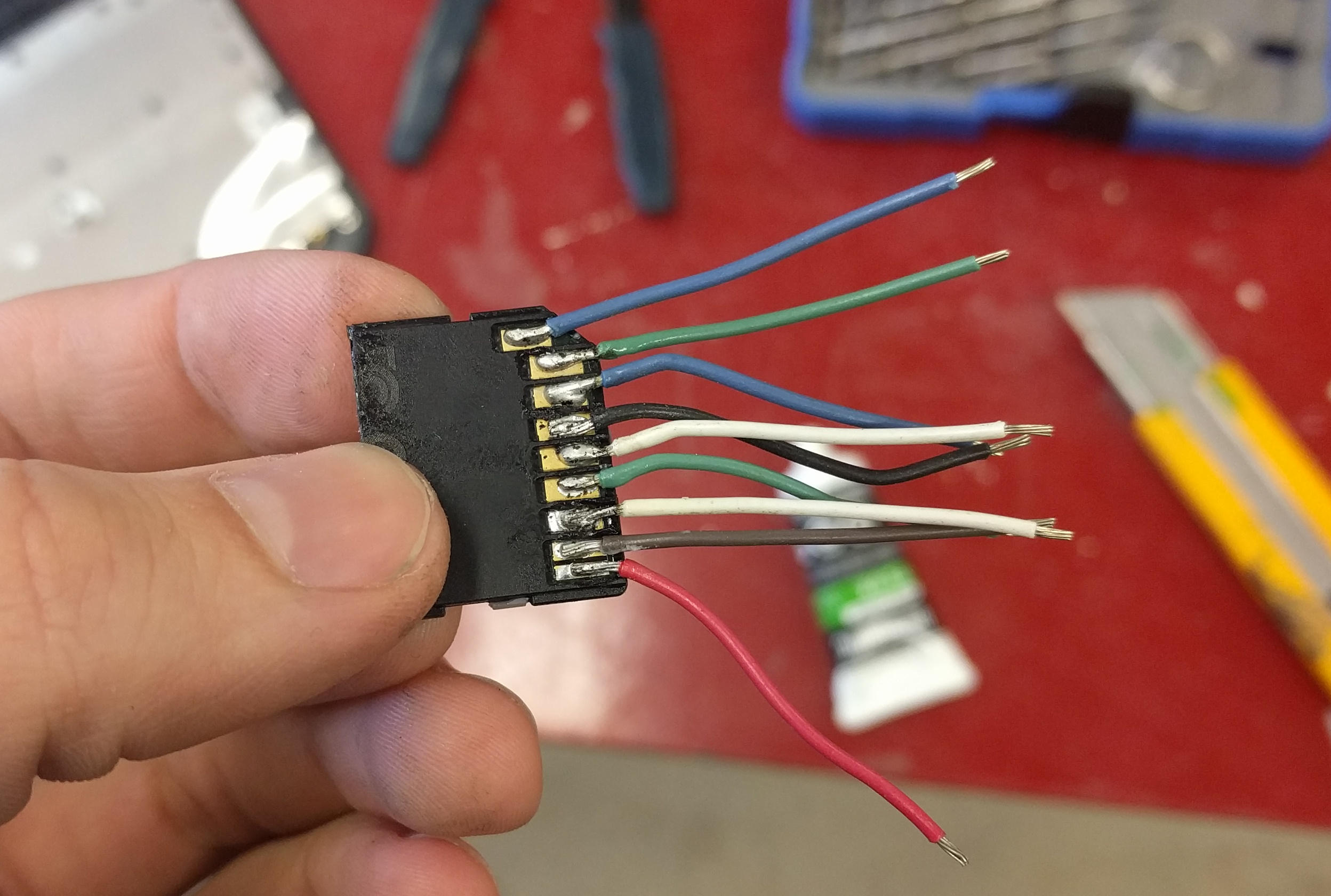

Next we need to prep the mold for casting. 3D printing is not perfect, so if we pour silicone directly into the mold, it will leak out. The tongue and groove system helps, but we need to seal it more. I find vaseline is a great sealant for this. Using a syringe (or whatever you have on hand), put some vaseline into the groove all along one side of the mold.

Close the two halves and open them again. Clean off any extra vaseline that has squeezed into the cavity. The silicone will flow around any vaseline left there, and it will leave defects in the final part.

Close the halves again and secure them together using rubber bands.

Mix the silicone following the manufacturer's instructions. Mine calls for a 1:1 ratio by volume of the two parts. I also add a little silicone dye for color. Make sure to mix well before pouring.
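
To work out how much to mix, a small calculation helps. This is a minimal Python sketch, assuming you know the approximate cavity volume (for example from your slicer or CAD tool). The function name and the 10% allowance for silicone left behind in the cup are assumptions, not part of the kit's instructions.

```python
def mix_amounts(cavity_ml, ratio=(1, 1), extra=0.10):
    """Return (part_a_ml, part_b_ml) needed to fill a cavity.

    cavity_ml: approximate volume of the mold cavity in millilitres.
    ratio:     parts A:B by volume (1:1 for the kit described above).
    extra:     assumed fraction of additional silicone to cover waste.
    """
    if cavity_ml <= 0:
        raise ValueError("cavity volume must be positive")
    total = cavity_ml * (1 + extra)
    share_a, share_b = ratio
    part_a = total * share_a / (share_a + share_b)
    part_b = total * share_b / (share_a + share_b)
    return part_a, part_b


if __name__ == "__main__":
    a, b = mix_amounts(40)  # hypothetical 40 ml cavity
    print(f"Part A: {a:.1f} ml, Part B: {b:.1f} ml")
```

If your silicone uses a different ratio, pass it as `ratio`, and check whether the manufacturer specifies it by volume or by weight.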

Pour the silicone slowly through the hole at the top. Try to get a slow but even pour with a long thin line of silicone flowing from your cup. This line helps keep bubbles out of the final product.

Wait until the silicone has cured. The silicone I am using calls for 24 hours, but I can usually remove the parts after 12 hours.

Conclusion

By this point you should be able to pull the mold halves apart and free the item inside. There will be a little flashing around the seam that you can cut off.

Now you have a new squishy Kia Soul. As you can see, there is a large bubble at the top near the pour hole in the mold. This happened because I didn’t make the opening large enough, so air got trapped. This is easy to fix in the CAD model.

I hope that this process was informative. There are a few things I am considering to improve it in the future:

- Making the pour opening larger to avoid trapping bubbles.

- Using a vacuum chamber to remove more air from the silicone.

- Sanding the mold to remove 3D printer layer lines.

- Pouring in multiple passes to make multicolored items.

If you make anything with this method or have suggestions to improve it, please reach out and let me know.
